Instructions

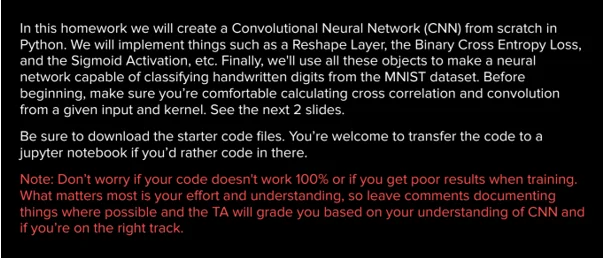

ObjectiveWrite a program to implement cross entropy in python.

Requirements and Specifications

Source Code

import numpy as np

from keras.datasets import mnist

from keras.utils import np_utils

from dense import Dense

from softmax import Softmax

from convolutional import Convolutional

from reshape import Reshape

from activations import Tanh, Sigmoid

from losses import binary_cross_entropy, binary_cross_entropy_prime

def preprocess_data(x, y, limit):

'''

Will limit our data since using the whole thing will take forever on a cpu especially since we're

implementing this from scratch.

'''

x = x.reshape(len(x), 1, 28, 28)

x = x.astype("float32") / 255

y = np_utils.to_categorical(y)

y = y.reshape(len(y), 10, 1)

return x[:limit], y[:limit]

# load MNIST from server, limit to 100 images per class since we're not training on GPU

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train, y_train = preprocess_data(x_train, y_train, 100)

x_test, y_test = preprocess_data(x_test, y_test, 100)

# TODO: Add our layers and the flow of input into this list.

network = [

Convolutional((1, 28, 28), 3, 5),

Sigmoid(),

Reshape((5, 26, 26), (5 * 26 * 26, 1)),

Dense(5 * 26 * 26, 100),

Sigmoid(),

Dense(100, 100),

Sigmoid(),

Dense(100, 10),

Softmax()

]

epochs = 100

learning_rate = 0.1

#train

for e in range(epochs):

error = 0

for x, y in zip(x_train, y_train):

# forward

output = x

for layer in network:

output = layer.forward(output)

# TODO: update our error

error += binary_cross_entropy(y,output)

grad = binary_cross_entropy_prime(y,output)

# TODO: perform back prop

for layer in reversed(network):

grad = layer.backward(grad,learning_rate)

error /= len(x_train)

print(f"{e + 1}/{epochs}, error={error}")

# TODO: run the test data through and print out your predictions

for x, y in zip(x_test, y_test):

output = x

for layer in network:

output = layer.forward(output)

print(f"y_pred: {np.argmax(output)}, y_true: {np.argmax(y)}")

Similar Samples

At Programming Homework Help, we offer a wide range of sample solutions to showcase our expertise in various programming languages. Our detailed and well-commented code examples serve as a testament to our commitment to quality and precision, helping you understand complex concepts effortlessly.

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python