Instructions

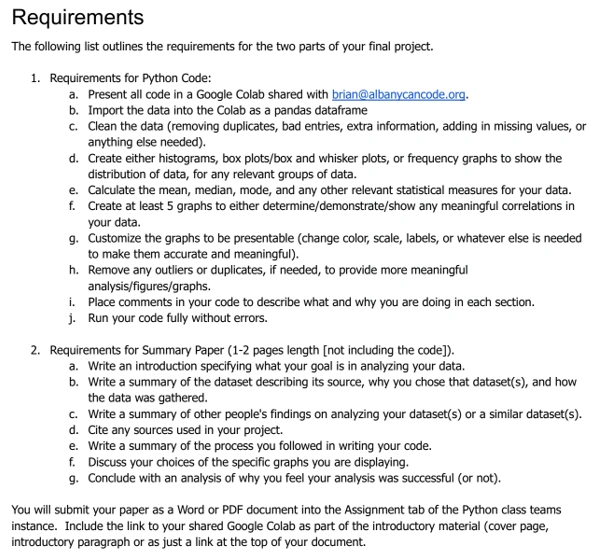

Requirements and Specifications

Source Code

# Required imports

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

# For this assignment, we will use the Titanic Dataset

The Titanic Dataset is a dataset containing samples about passengers in the Titanic. These samples are categorized by Sex, Ticket Class, Age, Name, etc., and a target variable called **Survived** with two possible values: 1 = Survived, 0 = Not Survived.

### 1. Load Dataset

data = pd.read_csv('train.csv')

## 2. Explore and describe the dataset.

### 2.a: See how the dataset is distributed

data.head()

### 2.b. Determine the frequency of values in columns for categorical values

In the next cell, we define inside a list the categorical columns. There are two categorical columns: 'Pclass' and 'Sex'. However, we consider that the Age is also important for this dataset, so we will categorize the samples based on the Age.

Let ***x*** be the age. The categories will be:

* 0 <= x <= 4: Baby (0)

* 5 <= x <= 12: Kid (1)

* 13 <= x <= 17: Teen (2)

* 18 <= x <= 59: Adult (3)

* 60 <= x: Senior (4)

We will also conver the 'Sex' columns from string to numeric values

* Male (0)

* Female (1)

The column 'Embarked' will also be converted to numerical values

* S (0)

* C (1)

* Q (2)

data.loc[(data.Age <= 4), 'AgeGroup'] = 0

data.loc[(data.Age >= 5) & (data.Age <= 12), 'AgeGroup'] = 1

data.loc[(data.Age >= 13) & (data.Age <= 17), 'AgeGroup'] = 2

data.loc[(data.Age >= 18) & (data.Age <= 59), 'AgeGroup'] = 3

data.loc[(data.Age >= 60), 'AgeGroup'] = 4

data.loc[data.Sex=='male', 'Sex'] = 0

data.loc[data.Sex == 'female', 'Sex'] = 1

data.loc[data.Embarked == 'S', 'Embarked'] = 0

data.loc[data.Embarked == 'C', 'Embarked'] = 1

data.loc[data.Embarked == 'Q', 'Embarked'] = 2

data.head()

categorical_columns = ['Pclass', 'Sex', 'AgeGroup']

fig, axes = plt.subplots(nrows = len(categorical_columns), ncols = 1, figsize=(10,10))

for i, col in enumerate(categorical_columns):

data.groupby([col])['Survived'].sum().plot.bar(ax=axes[i], grid=True)

axes[i].set_ylabel('No. of Survivors')

## 2.d. Outliers

To show the outliers, we can create a correlation plot that shows the relation between variables and how the changes in each one affect all others.

There are some non-numerical variables that are not needed for this example. Variables like 'Name', 'Ticket No.' does not affect if someoney survives or not.

These columns will be removed for the correlation plot

columns_to_ignore = ['PassengerId', 'Name', 'Ticket', 'Cabin', 'SibSp', 'Parch']

data2 = data.drop(columns = columns_to_ignore)

data2.head()

### Compute the correlation matrix

corrM = data2.corr()

sns.heatmap(corrM)

From the Correlation Map show above, we can see that there is a low relation between the Ticket Class and the chances of surviving. However, there is a higher correlation between the Age and the changes of Surviving, which means that the Age has a greater impact than the Ticket Class

## 2.e. Missing Points

We first count the number of missing points for each column

data.isnull().sum()

It can be seen that there are a lot of missing points for Cabin, and there are also missing points for Age and AgeGroup. The missing values in the Age variable affects the results for any kind of analysis because the Age column has a higher correlation with the target variable

One way of filling the values in rows is by just picking the previous value (previous row) and using that same value

data = data.ffill()

Now, count missing values again

data.isnull().sum()

# 3. Research your topic

### 3.a Find other analyses

[1] https://www.geeksforgeeks.org/python-titanic-data-eda-using-seaborn/#:~:text=Titanic%20Dataset%20%E2%80%93,given%20passenger%20survived%20or%20not.

[2] https://www.kaggle.com/startupsci/titanic-data-science-solutions

[3] https://www.analyticsvidhya.com/blog/2021/05/titanic-survivors-a-guide-for-your-first-data-science-project/

### 3.b. Describe the conclusions others have created

In the first link we see that an approach similar to mine was used for the analysis, where the correlation between the variables is studied and the number of survivors is studied for each of the categorical variables.

In [1] it was determined that the non-numeric columns are not important and that the 'Age' variable is very important for any type of analysis that you want to perform with this dataset.

In the second link [2], we see that they carried out a much more extensive and detailed analysis. In this case, each variable is studied separately, the incidence of each of the variables is determined, and it is even analyzed whether the surnames affected the fact that a person survived or not, which makes sense since at that time a good last name could secure you a spot on the life-saving ballot.

In reference [3], an analysis similar to that of [1] and [2] is performed, and a prediction model is also created. We see that the categorical variables are analyzed separately again and we see that the ticket number was even analyzed and how it affects the model

### 3.c: Define the target audicence of your analysis

The audience for this study are people interested in dataset analysis and regression and prediction models (machine learning). This type of dataset is well known for Machine learning models since they are easy to

# 4. Buid your analysis plan

### 4.a: What data do you need to solve your problem? Review your data exploration for that data

Any type of dataset with categorical variables are very useful to apply the analyzes shown in this work.

### 4.b: Do you need to clean or enhance the data to address outlier/missing values? List any new columns that need to be created.

Yes, datasets always require prior analysis and cleaning. If they have missing values, those data must be filled with previous values, previous values, or ultimately, the rows with those missing values must be eliminated.

On the other hand, there are a series of variables that must be categorized. For example, in our study we categorized ages into 5 groups. This process is very useful for other types of variables such as weight, muscle mass, etc.

### 4.c: Describe any techniques you use to answer the question.

The techniques used in the work are simple but effective. In the first instance, you must see how the dataset is composed: the column names, the type of value (numeric or non-numeric), the importance that each one represents (for example, the Name of the person is not important), etc.

Based on this, it is decided whether to drop columns, transform (categorize), normalize, etc.

### 4.d: Describe why you consider your answer successful.

According to my research, these steps are vital and are applied in 99.99% of the cases where this type of dataset is used, whether for linear regression, machine learning, etc. (See references [1], [2],

### 4.e: Describe the expectations you are trying to prove or disprove.

My interest is to verify that there are variables (columns) that are much more important than others. After performing the analysis, we want to prove that there are important factors that caused some people to be saved and others to die (in the case of the Titanic dataset). Variables such as age were of great impact in determining whether a person survived or not.

Related Samples

Explore Python Assignment Samples: Dive into our curated selection showcasing Python solutions across diverse topics. From basic algorithms to advanced data structures, each sample illustrates clear problem-solving strategies. Enhance your understanding and master Python programming with our comprehensive examples.

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python

Python